Research Prototypes

Here are a few research prototypes whose implementation I was involved in (finding and refining ideas and/or coding). You can find the corresponding publication(s) right below the screenshots.

3D Image Globe

This prototype was one of my earliest research projects. Its goal is to visualize a user's image library in a new and interesting way. To do so, it combines a 3D presentation with sorting the images by their dominant color. Before arriving at the final stage shown here, we went through several configurations that differed in how the images were displayed on the globe's surface.
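The description above does not say how the dominant color is determined. The following is only a minimal sketch under stated assumptions: each image is available as a list of RGB pixels, the dominant color is taken as the center of the most populated bin of a coarse RGB histogram, and images are ordered by the hue of that color. All of these are illustrative choices, and `dominant_color` and `sort_by_dominant_hue` are hypothetical names, not the prototype's code.

```python
import colorsys
from collections import Counter

Pixel = tuple[int, int, int]  # (r, g, b), each channel in 0..255


def dominant_color(pixels: list[Pixel], bins_per_channel: int = 8) -> Pixel:
    """Return the center of the most populated bin of a coarse RGB histogram.

    Assumption: a simple histogram stands in for whatever the prototype used.
    """
    step = 256 // bins_per_channel
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    (rb, gb, bb), _ = counts.most_common(1)[0]
    # Map each bin index back to the center of the color range it covers.
    return (rb * step + step // 2, gb * step + step // 2, bb * step + step // 2)


def hue_of(color: Pixel) -> float:
    """Hue in [0, 1) of an RGB color, via the standard library's colorsys."""
    r, g, b = (c / 255 for c in color)
    h, _s, _v = colorsys.rgb_to_hsv(r, g, b)
    return h


def sort_by_dominant_hue(images: dict[str, list[Pixel]]) -> list[str]:
    """Order image names by the hue of their dominant color (red, green, blue, ...)."""
    return sorted(images, key=lambda name: hue_of(dominant_color(images[name])))
```

Sorting by hue gives a one-dimensional color ordering; placing that ordering on a sphere is a separate layout step that this sketch does not attempt.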

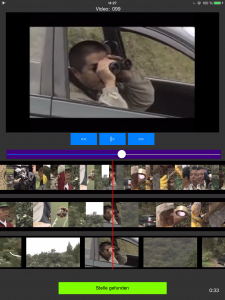

3D Filmstrip

To make it easier for users to get a quick overview of a video's contents through an intuitive visualization, I developed the idea of a 3D filmstrip that can be operated by touch and supports browsing at different levels of detail.

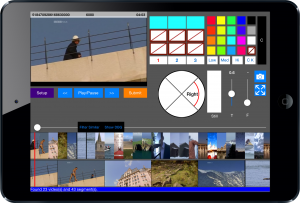

ThumbBrowser

The idea of split keyboards on tablets has always resonated with me, so I developed a touch-based video browser that follows the same principle. When users hold their tablet in landscape orientation, they can operate the browser without lifting either hand: everything is accessible with just their thumbs! Users can also switch between zoom levels on the video timeline for finer control in longer videos. In its latest iteration, the browser also analyzes the video content and highlights faces as well as dominant colors directly in the vertical timeline.

ImagePane

This small smartphone app sorts a user's image library by color and displays the entire library on a single screen by adapting the thumbnail size accordingly. With a double tap, users can inspect color regions of interest in a zoomed view.
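How the app computes the thumbnail size is not described here. As a rough sketch, assuming uniform square thumbnails laid out in a grid and a screen size given in pixels, the largest thumbnail edge that still fits the whole library on screen can be found by trying edge lengths from large to small. The function name `fit_thumbnails` is hypothetical.

```python
def fit_thumbnails(n_images: int, width: int, height: int) -> tuple[int, int, int]:
    """Largest square thumbnail edge (px) so that n_images fit on a width x height screen.

    Assumption: all thumbnails share one size and sit in a regular grid.
    Returns (edge, columns, rows).
    """
    if n_images <= 0:
        raise ValueError("need at least one image")
    # (width // edge) * (height // edge) never grows as edge grows,
    # so the first edge that fits, scanning downward, is the largest one.
    for edge in range(min(width, height), 0, -1):
        cols, rows = width // edge, height // edge
        if cols * rows >= n_images:
            rows = -(-n_images // cols)  # ceiling division: drop unused rows
            return edge, cols, rows
    raise ValueError("screen too small to show every image")
```

For example, `fit_thumbnails(500, 1080, 1920)` yields 63 px thumbnails in 17 columns and 30 rows. The linear scan is cheap at screen resolutions; a binary search over the edge length would also work because the fit condition is monotonic.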

KNT-Browser

This video browser was developed in cooperation with colleagues in China and supports browsing videos based on so-called ‘sub-shots’, the segments produced by a very fine-grained video segmentation method. It visualizes these sub-shots on three synchronized strips that provide different levels of insight into them, so that users can switch on the fly between fast but coarse and slow but detailed browsing.

MSV-Browser

As a direct successor, we developed the Multi-Stripe Video Browser. It used an improved version of the KNT-Browser's video segmentation and combined it with additional functionality, such as filtering by color layout, keypoint density, photo similarity, shot similarity, and motion.

Jessy (Dev. Name)

This system consists of collaborating mobile clients (tablets) and a server (a traditional computer). Its aim is to improve filtering and searching in both single videos and video libraries. The clients query the server for shots that match given search criteria (such as color, motion, or audio). To avoid redundant searching, every client is kept informed about the other participants' actions: already viewed shots, submitted queries, and set bookmarks. Query results are visualized on a 3D ring for easy and intuitive inspection.

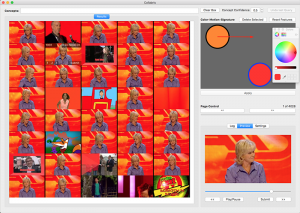

Collabris (Dev. Name)

This desktop application enabled users to navigate through a huge video dataset (250 hours of video in our example) by filtering for concepts (such as ‘container ship’ or ‘tree’) and by sorting shots based on a sketch. The sketch-based approach relied on analyzing the shots for color-motion feature signatures. Furthermore, for a given shot, it could find all similar shots based on their color-motion feature signatures (very quickly), as well as show the shot surrounded by its predecessors and successors with a single click. At the annual Video Browser Showdown 2016, the system performed exceptionally well!
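The description does not specify the format of the color-motion feature signatures or the distance used to compare them. The sketch below deliberately swaps in a simpler stand-in: each shot is assumed to be reduced to a fixed-length vector (the hypothetical `Descriptor` type), and similar shots are found by a brute-force Euclidean nearest-neighbor scan. It also shows the predecessor/successor view as a slice of the shot sequence. `similar_shots` and `shot_context` are hypothetical names, not the system's API.

```python
import math

# Assumption: a fixed-length vector stands in for a color-motion feature signature.
Descriptor = list[float]


def similar_shots(query: Descriptor, shots: dict[str, Descriptor], k: int = 5) -> list[str]:
    """Return the ids of the k shots closest to `query` (Euclidean distance, linear scan)."""
    ranked = sorted(shots, key=lambda sid: math.dist(query, shots[sid]))
    return ranked[:k]


def shot_context(shot_ids: list[str], index: int, radius: int = 2) -> list[str]:
    """Return the shot at `index` with up to `radius` predecessors and successors."""
    return shot_ids[max(0, index - radius): index + radius + 1]
```

A linear scan is the simplest correct baseline; fast execution on a dataset of this size would typically call for an index or a cheaper filtering step before exact ranking, which this sketch omits.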

Copyright © 2026